Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

The year 2026 has officially marked the “Point of No Return” for traditional cinematography. We are no longer debating whether AI can create videos; we are now choosing which AI Video Stack defines a professional studio’s workflow.

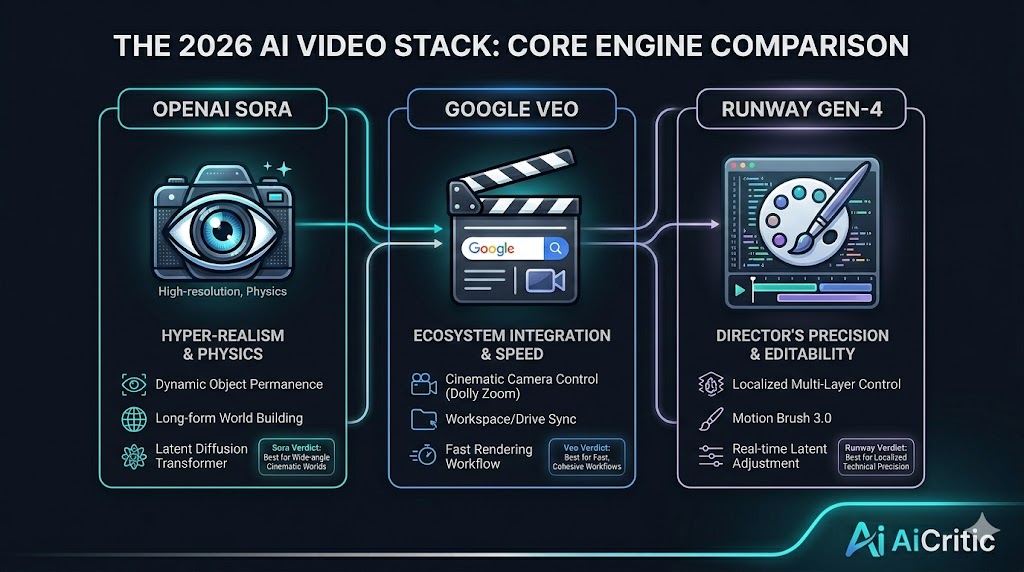

At AiCritic, we have spent the last quarter stress-testing the industry’s titans: OpenAI’s Sora, Google’s Veo, and Runway’s Gen-4 framework. For professional filmmakers, the choice isn’t just about resolution; it’s about temporal consistency, physics-engine accuracy, and director-level control.

In the early days of 2024, AI video was often criticized for “melting” limbs and distorted backgrounds. Fast forward to 2026, and the shift toward Latent Diffusion Transformers has, in our testing, largely resolved those consistency problems.

Professional filmmaking now requires a “Stack” approach. Most studios aren’t using just one tool; they are using a combination of tools for different stages of production. But if you had to choose a primary engine, where should you invest?

Sora remains the gold standard for “World Modeling.” What sets Sora apart in 2026 is its ability to understand complex physics.

Key Feature: Dynamic Object Permanence. If a character walks behind a tree in a 60-second Sora clip, they emerge on the other side with the exact same facial features and clothing.

Best For: Long-form cinematic shots and environment building.

The AiCritic Verdict: Sora is unparalleled for wide-angle “world-building” shots, but its rendering times remain longer than those of its competitors.

Google’s Veo has become the favorite for commercial directors. Why? Because it lives inside the Google Workspace Ecosystem.

Key Feature: Cinematic Control. Veo allows users to specify camera movements (pan, tilt, zoom) using professional terminology like “Dolly Zoom” or “Rack Focus” with 99% accuracy.

Integration: In 2026, Veo’s ability to pull assets directly from Google Drive and sync with YouTube’s native AI editing tools makes it the fastest workflow for content creators.

The AiCritic Verdict: If your goal is speed and professional camera control, Veo wins.

While Sora and Veo focus on generation, Runway has pivoted toward Editability. Runway’s “Motion Brush 3.0” is the most advanced tool for localized motion control in 2026.

Key Feature: Multi-Layer Control. You can generate a scene and then tell Runway to only change the lighting on the actor’s face without regenerating the entire background.

Innovation: Runway’s “Director Mode” allows for real-time adjustments to the model’s latent space.

The AiCritic Verdict: Runway is for the “Control Freak” filmmaker who needs every pixel to be perfect.

| Feature | OpenAI Sora | Google Veo | Runway Gen-4 |
| --- | --- | --- | --- |
| Max Clip Duration | 120 Seconds | 60 Seconds | 30 Seconds (Infinite Loop) |
| Physics Accuracy | 9.5/10 | 8.5/10 | 8.0/10 |
| Rendering Speed | Slow (High Compute) | Fast | Real-time (Draft Mode) |
| Editing Control | Prompt-based | Camera-based | Layer-based |
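For teams that want to shortlist an engine per shot, the comparison can be encoded as plain data. The sketch below is illustrative only: the `Engine` class and the `engines_for_clip` helper are hypothetical names, the figures are AiCritic's ratings from the comparison above rather than vendor specifications, and nothing here calls any vendor API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Engine:
    name: str
    max_clip_seconds: int   # "Max Clip Duration" from the comparison
    physics_score: float    # "Physics Accuracy" score out of 10
    editing_control: str    # "Editing Control" style


# Values copied from the comparison; they are editorial ratings, not specs.
ENGINES = [
    Engine("OpenAI Sora", 120, 9.5, "prompt-based"),
    Engine("Google Veo", 60, 8.5, "camera-based"),
    Engine("Runway Gen-4", 30, 8.0, "layer-based"),
]


def engines_for_clip(seconds: int) -> list[str]:
    """Return engines whose listed max duration covers the clip, best physics score first."""
    fits = [e for e in ENGINES if e.max_clip_seconds >= seconds]
    ranked = sorted(fits, key=lambda e: e.physics_score, reverse=True)
    return [e.name for e in ranked]


print(engines_for_clip(45))  # ['OpenAI Sora', 'Google Veo']
```

A 45-second shot rules out Runway's 30-second limit, so only Sora and Veo remain, ordered by physics score.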

To maintain a Premium Studio Workflow, we recommend the following setup:

Base Generation: Use Sora for your establishing shots and complex environments.

Character Continuity: Use Runway to refine specific movements and facial expressions.

Final Polish: Use Veo for cinematic camera movements and color grading integration.
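One way to keep this setup consistent across a production is to write the three stages down as a small routing table. This is a minimal sketch under our own assumptions: `STACK` and `plan_shot` are hypothetical names, and the code only produces a checklist; it does not invoke Sora, Runway, or Veo.

```python
# Stage -> (tool, purpose), in the order recommended above.
STACK = [
    ("base_generation", "Sora", "establishing shots and complex environments"),
    ("character_continuity", "Runway", "specific movements and facial expressions"),
    ("final_polish", "Veo", "cinematic camera movements and color grading integration"),
]


def plan_shot(shot_name: str) -> list[str]:
    """Return an ordered checklist of stack stages for a single shot."""
    return [
        f"{shot_name}: {stage} -> {tool} ({purpose})"
        for stage, tool, purpose in STACK
    ]


for step in plan_shot("opening_aerial"):
    print(step)
```

Keeping the mapping in one place means a studio can swap the tool for a stage without touching the rest of its tooling.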

As we mentioned in our guide on AI Automation Workflows, integrating these tools into a single pipeline is the only way to scale your production.

At AiCritic, we believe the “Human-in-the-loop” is more important than ever. While these tools can generate 8K footage, they cannot generate Emotion. The best filmmakers in 2026 are using AI as a “Camera,” not as a “Director.”

The choice between Sora, Veo, and Runway depends on your specific needs. If you want raw realism, go for Sora. If you want seamless workflow, Veo is the answer. For precision editing, Runway remains the champion.

The 2026 AI Video Stack is not about replacing the camera; it’s about expanding the imagination.